Open data in Chicago: progress and direction

In a wonderfully comprehensive overview of Government 2.0 in 2011 up at the O’Reilly Radar blog, Alex Howard highlights “going local” as one of the defining trends of the year.

All around the country, pockets of innovation and creativity could be found, as “doing more with less” became a familiar mantra in many councils and state houses.

That’s certainly been the case in the seven-and-a-half months since Mayor Emanuel took the helm in Chicago. Doing more with less has been directly tied to initiatives around data and the implications they have had for real change of government processes, business creation, and urban policy. I’d like to outline what’s been accomplished, where we’re headed and, importantly, why it matters.

The Emanuel transition report laid out a fairly broad charge for technology in his office.

Set high standards for open, participatory government to involve all Chicagoans.

In asking ourselves why open and participatory mattered, we developed the following four principles. The first two are fairly well-established tenets of open government; the last two are long-term policy rationales for positioning open data as a driver of change.

First, the raw materials. Chicago’s data portal, which was established under the previous administration, finally got a workout. It currently hosts 271 data sets with over 20 million rows of data, many updated nightly. Since May 16 the portal has been viewed over 733,201 times and over 37 million rows of data have been accessed.

But it’s the quality rather than the quantity that’s worth noting. Here’s a sampling of the most accessed data sets.

- TIF Projection Reports

- Building Permits (2006 to present)

- Food Inspections

- Vacant and Abandoned Buildings Reported

- Crimes – 2001 to Present (more block-level crime data than any other city, updated nightly)

Here’s a map view of the installed bike rack data set.

As a start towards full-fledged performance management, we launched cityofchicago.org/performance for tracking most anything that touches a resident: hold time for 311 service requests, time to pavement cave-in repair, graffiti removal and business license acquisition, zoning turnarounds, and similar. Currently there are 43 measurements, updated weekly. Here’s an example for average time for pothole repair.

To be sure, all this data can be inscrutable to residents. (One critic of the effort called it “democracy by spreadsheet”.) But the data is merely a foundation, not meant as an end in itself. As we make the publication of this data part of departments’ standard operating procedure, the goal has shifted to creation of tools, internally and in the community, for understanding the data.

As a way of fostering development of useful applications, the City joined its data with Chicago-specific sets from the State of Illinois, Cook County, and the Chicago Metropolitan Agency for Planning to launch an app development competition. Anyone with an idea and coding chops who used at least one of the City’s sets was eligible for prize money put up by the MacArthur Foundation.

Run by the Metro Chicago Information Center, Apps for Metro Chicago was open for about six months and received over 70 apps covering everything from community engagement to sustainability. (You can find the winners for the various rounds here: Transportation, Community, Grand Challenge.)

Here are some of my favorite apps created from the City’s open data.

- Mi Parque – A bilingual participatory placemaking web and smartphone application that helps residents of Little Village ensure that their new park is maintained as a vibrant, safe, open and healthy green space for the community.

- SweepAround.us – Enter your address, receive an email or text message letting you know when the street sweepers are coming to your block so you can move your car and avoid getting a ticket.

- Techno Finder – Consolidated directory of public technology resources in Chicago.

- iFindIt – App for social workers, case managers, providers and residents to provide quick information regarding access to food, shelter and medical care in their area.

The apps were fantastic, but the real output of A4MC was the community of urbanists and coders that came together to create them. In addition to participating in a new form of civic engagement, these folks also form the basis of what could be several new “civic startups” (more on which below). At hackdays generously hosted by partners and social events organized around the competition, the community really crystallized, an invaluable asset for the city.

Open data hackday hosted by Google

Beyond fulfilling a promise from the transition report, why is any of this important? The overarching answer is not about technology at all, but about culture-change. Open data and its analysis are the basis of our permission to interject the following questions into policy debate: How can we quantify the subject-matter underlying a given decision? How can we parse the vital signs of our city to guide our policymaking?

The mayor created a new position (unique in any city as far as I know) called Chief Data Officer who, in addition to stewarding the data portal and defining our analytics strategy, is instrumental in promoting data-driven decision-making either by testing processes in the lab or by offering guidance for problem-solving strategies. (Brett Goldstein is our CDO. He is remarkable.)

As we look to 2012, four evolutions of open data guide our efforts.

The City-as-Platform

There are a variety of ways to work with the data in the City’s portal, but the most flexible use comes from accessing it via the official API (application programming interface). Developers can hook into the portal and receive a continuously-updated stream of data without manually refreshing their applications each time changes happen in the feed. This changes the City from a static provider of data to a kind of platform for application development. It’s a reconceptualization of government not as provider of end user experience (i.e., the app or service itself), but as the provider of the foundation for others to build upon. Think of an operating system’s relationship to the applications that third-party developers create for it.
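As a rough illustration of what hooking into the portal can look like, here is a minimal Python sketch that builds the paged request URLs a developer might poll. It is a sketch under assumptions, not the portal's documented interface: it assumes a Socrata-style resource endpoint on `data.cityofchicago.org` and the `$limit`, `$offset`, and `$order` query parameters found in later versions of the Socrata API. The dataset identifier `xxxx-xxxx` and the helper name `page_urls` are placeholders made up for this example.

```python
from urllib.parse import urlencode

# Assumption: a Socrata-style endpoint of the form
# https://<portal-host>/resource/<dataset-id>.json
PORTAL_HOST = "data.cityofchicago.org"


def page_urls(dataset_id, total_rows, page_size=1000, order=":id"):
    """Return the URLs needed to read total_rows rows, page_size rows at a time."""
    base = f"https://{PORTAL_HOST}/resource/{dataset_id}.json"
    urls = []
    for offset in range(0, total_rows, page_size):
        # safe="$:" keeps the parameter names readable instead of percent-encoding them
        query = urlencode(
            {"$limit": page_size, "$offset": offset, "$order": order},
            safe="$:",
        )
        urls.append(f"{base}?{query}")
    return urls


# "xxxx-xxxx" is a placeholder, not a real dataset identifier.
for url in page_urls("xxxx-xxxx", total_rows=2500):
    print(url)
```

Ordering on a stable key matters here: without it, rows can shift between pages while the data set is being updated.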

Consider the CTA’s Bus Tracker and Train Tracker. The CTA doesn’t have a monopoly on providing the experience of learning about transit arrivals. While it does have web apps, it exposes its data via API so that others can build upon it. (See Buster and QuickTrain as examples.) This model is the hybrid of outsourcing and civic engagement and it leads to better experiences for residents. And what institution needs a better user experience all around than government?

But what if all City services were “platform-ized” like this? We’re starting 2012 with the help of Code for America, a fellowship program for web developers in cities. They will be tackling Open 311, a standard for wrapping legacy municipal customer service systems in a framework that turns them, too, into a platform for straightforward (and third-party) development. The team arrives early in 2012 and will be working all year to create the foundation for an ecosystem of apps that will allow everything from one-snap photo reporting of potholes to customized ward-specific service request dashboards. We can’t wait.

The larger implications of platformizing the City of Chicago are enormous, but the two that we consider most important are the Digital Public Way (which I wrote about recently) and how a platform-centric model of government drives economic development. Bringing us to …

The Rise of Civic Startups

It isn’t just app competitions and civic altruism that prompt developers to create applications from government data. 2011 was the year when it became clear that there’s a new kind of startup ecosystem taking root on the edges of government. Open data is increasingly seen as a foundation for new businesses built using open source technologies, agile development methods, and competitive pricing. High-profile failures of enterprise technology initiatives and the acute budget and resource constraints inside government only make this more appealing.

An example locally is the team behind chicagolobbyists.org. When the City published its lobbyist data this year, Paul Baker, Ryan Briones, Derek Eder, Chad Pry, and Nick Rougeux came together and built one of the most usable, well-designed, and outright useful applications on top of any government data. (Another example of this, from some of the same crew, is the stunning Look at Cook budget site.)

But they did not stop there. As the result of a recent ethics ordinance the City released an RFP to create an online lobbyist registration system. The chicagolobbyists.org crew submitted a proposal. Clearly the process was eye-opening. Consider the scenario: a small group of nimble developers with deep subject matter expertise (from their work with the open data) go toe-to-toe with incumbents and enterprise application companies. The promise of expanding the ecosystem of qualified vendors, even changing the skills mix of respondents, is a new driver of the release of City data. (Note I am not part of the review team for this RFP.)

One of the earliest examples of civic startups — maybe the earliest — is homegrown. Adrian Holovaty’s Everyblock grew out of ChicagoCrime.org, which itself was a site built entirely on scraped data about Chicago public safety.

For more on the opportunity for civic startups see Nick Grossman’s excellent presentation. (And let’s not forget the way open data — and truly creative hacker-journalists — are changing the face of news media.)

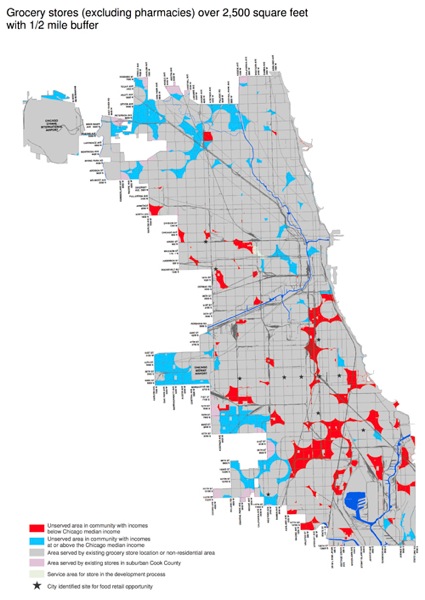

Predictive Analytics

Of all the reasons for promoting a culture of data-driven decision-making, the promise of using deep analytics and machine learning to help us isolate patterns in enormous data sets is the most important. Open data as a philosophy is easily as much about releasing the floodgates internally in government as it is in availing data to the public. To this end we’re building out a geo-spatial platform to serve as the foundation of a neighborhood-level index of indicators. This large-scale initiative harnesses block- and community-level data for making informed predictions about potential neighborhood outcomes such as foreclosure, joblessness, crime, and blight. Wonkiness aside, the goal is to facilitate policy interventions in the areas of public safety, infrastructure utilization, service delivery, public health and transportation. This is our moonshot and 2012 is its year.

Above, a very granular map from early in the administration isolating food deserts in Chicago. It is being used to inform our efforts at encouraging new fresh food options in our communities. This, scaled way up, is the start of a comprehensive neighborhood predictive model.

Unified Information

The same platform that aggregates information geo-spatially for analytics by definition is a common warehouse for all City data tied to a location. It is, in short, a corollary to our emergency preparedness dashboards at the Office of Emergency Management and Communication (OEMC), a visual, cross-department portal into information for any geographic point or region. This has obvious implications for the day-to-day operations of the City (for instance, predicting and consolidating service requests on a given block from multiple departments).

But it also is meaningful for the public. Dan O’Neil recently wrote a great post on the former Noel State Bank Building at 1601 N. Milwaukee. It’s a deep dive into the history of a single place, using all kinds of City and non-City data. What’s most instructive about the post is the difficulty in aggregating all this information and the output of the effort itself: Dan has produced a comprehensive cross-section of a small part of the city. There’s no reason that the City cannot play an important role in unifying and standardizing its information geo-spatially so that a deep dive into a specific point or area is as easy as a Google search. The resource this would provide for urban planning, community organizing and journalism would be invaluable.

There’s more in store for 2012, of course. It’s been an exhilarating year. Thanks to everyone who volunteered time and energy to help us get this far. We’re only just getting started.

“Developers can hook into the portal and receive a continuously-updated stream of data without manually refreshing their applications each time changes happen in the feed.”

John, I think until Socrata offers change tracking or you guys add row level update timestamps, this is a bit of an oversell. The Socrata data API isn’t tuned for the large high value datasets like crime or business licenses.

Nevertheless, thank you for sharing your vision. Much has been accomplished in 2011 and I look forward to seeing what the new year brings us.

Joe, fair point indeed. Socrata has been very receptive to our frequent feedback, but there’s clearly a ways to go. We’ll make a point of prioritizing functionality for large and high-value sets.

The city of Chicago has taken Open Data by storm by putting unprecedented amounts of rich and diverse information resources online. The fact that many large datasets are being updated on a daily basis to keep the information current is just one example of their commitment. Nearly every week we have customers asking us what they can learn from Chicago’s open data efforts and how they can catch up. This is saying something considering other impressive Socrata-powered city initiatives like Seattle, New York City, New Orleans, Baltimore and Edmonton.

We’re working closely with Chicago’s open data team to improve both our software and the consumption experience for their information assets and resources. We’ve recently deployed a host of upgrades to our platform which have improved both query performance and the speed and frequency with which dataset updates can be performed. We’re working together on new, more tightly-integrated update processes which will allow Chicago to perform in-place updates in a fraction of the time.

One of the Big Data problems that we’re constantly optimizing for is how to keep large datasets of upwards of 5 million rows performant with our caching, full-text indexing, and replication technologies while simultaneously allowing real-time query capabilities for people who want to interactively explore and visualize the data.

We’re also developing the next generation of the Socrata Open Data API (SODA 2.0) which features amongst other things a MUCH more expressive and easier to learn SQL-based query syntax, simpler, cleaner data formats, and tons of other developer-focused features. We’ve gone back to the drawing board and rebuilt the API from scratch to focus on the developer and I’m very excited to be close to sharing that experience with others.

The Chicago team has also been very responsive to feedback about the usability of the data itself – I’m sure they’ll take your suggestion about providing row update timestamps to heart and introduce those into more of their datasets – that metadata is already part of the new update processes we’re working on.

We are proud and fortunate to partner with the City of Chicago and other progressive thought leaders in government to make public data easy to access, share and reuse. Keep the comments coming. We very much value the feedback of the Open Data community.

@Chris: thanks for the updates on what Socrata is doing. I think there’s a basic tension between providing interactive access to data and providing it in bulk. I’ll admit I’m pretty indifferent to the interactive features. That doesn’t mean they are a waste of time—just that I’m going to be cheerleading for the other facet.

I’m not totally clear on one thing: you say that update timestamps are part of the metadata in your new process, but also that the Chicago team has been responsive and will take suggestions to heart. I think that means “we’ll have it, but because of our schedule, it wouldn’t be a waste for the city to add it in the meantime.” I guess ultimately the city might be well off including the change data in the dataset anyway, to be completely explicit.

> I guess ultimately the city might be well off including the change data in the dataset anyway, to be completely explicit.

That’s correct, and it’s the strategy we suggest that data publishers use when architecting their datasets. Socrata does maintain our own metadata for when a particular record was created or updated, but for many datasets it isn’t authoritative since it may be some time before the record update finds its way from the system of record to the open data platform. It’s best that the data publisher provide an explicit “Last Updated” field so that it’s very clear when the update occurred.

As I understand it, that’s the plan for the Crimes dataset and for other datasets where that metadata would be useful.
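To make the value of an explicit “Last Updated” field concrete, here is a minimal Python sketch of how a consumer could pick out only the rows that changed since its previous sync. It assumes each row carries an ISO 8601 timestamp in a field called `last_updated`; that field name, the function name, and the sample rows are hypothetical and not taken from any actual Chicago dataset.

```python
from datetime import datetime


def rows_changed_since(rows, watermark, field="last_updated"):
    """Return (changed_rows, new_watermark) for rows stamped after watermark.

    Assumes row[field] is an ISO 8601 string such as "2012-01-05T09:30:00".
    """
    changed = []
    newest = watermark
    for row in rows:
        stamp = datetime.fromisoformat(row[field])
        if stamp > watermark:
            changed.append(row)
            if stamp > newest:
                newest = stamp
    return changed, newest


# Hypothetical sample rows, for illustration only.
sample_rows = [
    {"id": "1", "last_updated": "2012-01-02T03:00:00"},
    {"id": "2", "last_updated": "2012-01-05T09:30:00"},
]
changed, watermark = rows_changed_since(sample_rows, datetime(2012, 1, 3))
print([row["id"] for row in changed], watermark)
```

The consumer stores the returned watermark and passes it in on the next run, so each sync only touches rows the publisher has marked as newer.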

This is such a great post it just made me miss my stop on BART. Damn.

“The promise of expanding the ecosystem of qualified vendors, even changing the skills mix of respondents, is a new driver of the release of City data.”

This is really the next phase of this movement, building real sustainable businesses on this data. Cue my throw to the Code for America Accelerator we’re launching this year.

Thanks for the great post.

“reconceptualization of government … as the provider of the foundation for others to build upon”

This is a trend beyond government. Hurwitz & Associates recently published the results of a survey that found the top five technical motivations for offering an API to be: application integration, collaborate with partners, connect to more devices, speed external innovation, and enable outsourced innovation. Top five business motivations: connect to more partners, expand channel strategy, expand reach, compete more effectively, and increase top line revenue.

(Source: http://www.hurwitz.com/index.php/component/content/article/388-hurwitz-a-associates-announces-the-results-of-recent-web-api-study)

As for the existing API (SODA), I don’t think any app in the A4MC contest used it. If the app used the open data, I think every one of them pulled down a copy to use. Keep talking to Socrata about a revision.

At least one A4MC app used SODA. Crime Alert http://www.crimealertchicago.org/ gets the latest updates from the city database daily using SODA. It was a real pain to set up a SODA query on a date range though, so I wrote a helper app that anyone can use: http://www.socrataquery.org/ I understand Socrata is working on a better API now.
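For readers wondering what a date-range query involves, here is a minimal Python sketch that builds such a filter. It assumes the SQL-like `$where` syntax of later Socrata API versions, not the API available when this comment was written, and the column name `date` and the function name `date_range_where` are hypothetical.

```python
from datetime import date, datetime, time, timedelta


def date_range_where(field, start, end):
    """Build a filter matching every timestamp from start through end, inclusive.

    Uses a half-open range (>= start of first day, < start of the day after
    the last day) so no time on the final day is missed.
    """
    lower = datetime.combine(start, time.min)
    upper = datetime.combine(end + timedelta(days=1), time.min)
    return (
        f"{field} >= '{lower.isoformat()}' "
        f"AND {field} < '{upper.isoformat()}'"
    )


# The resulting string would be sent as the value of a $where parameter.
print(date_range_where("date", date(2012, 1, 1), date(2012, 1, 31)))
```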

John,

Great post. I am excited to see what will come from the city and the entrepreneurial mindset growing in Chicago!

As a side note, my name was misspelled. Any chance we can remove this Char guy and replace him with me? 😉

John, a fascinating post. I hope to participate in your online forum on 1/5. In my own blog (above) I have begun to speculate on the transformation of citizen mentalities and expectations for government services with the advent of digital media and other technologies (robotics). I suppose it is just possible that as citizens are more and more capable of quickly reporting on issues in their neighborhood and monitoring the response of government to those issues, a revolution of rising expectations could ensue. In other words, citizens could come to expect more than government can actually accomplish, especially in a situation of budgetary constraint. The fact is that we really don’t know how such technologically driven changes could change attitudes towards and expectations of government.

John, the discussion on Personal Democracy Forum was fascinating.

John, thanks for this excellent synthesis and framing piece. Especially:

“Beyond fulfilling a promise from the transition report, why is any of this important? The overarching answer is not about technology at all, but about culture-change. Open data and its analysis are the basis of our permission to interject the following questions into policy debate: How can we quantify the subject-matter underlying a given decision? How can we parse the vital signs of our city to guide our policymaking?”

and

“The City-as-Platform”

“It’s a reconceptualization of government not as provider of end user experience (i.e., the app or service itself), but as the provider of the foundation for others to build upon. Think of an operating system’s relationship to the applications that third-party developers create for it…This model is the hybrid of outsourcing and civic engagement and it leads to better experiences for residents. And what institution needs a better user experience all around than government?”

Posts like this just exacerbate that annoying foundation-staffer tendency to press already-overworked people to find more time to write 🙂

John

Great summary of what Chicago has done and where it’s going. Looks like an exciting year for people interested in improving cities. Thanks!

Nice link to the music too!

John, this is amazing. As part of IBM’s Corporate Service Corps, we dug into the Open Data Concept for the Kenyan Government. Someone posted your blog on our team’s closed Facebook group. Keep the message going; it’s inspirational.

Michelle