Ghost Ecosystems

Bubbling this old post to the top given some recent chatter around using traditional indicators of urban decay — trash, graffiti, boarded-up windows — to make inferences using predictive modeling (and, of course, AI). As a system for understanding future municipal resource deployment for spot-fixes and infrastructural interventions — and decisively not to resurrect discredited theories suggesting these indicators encourage crime — this makes a lot of sense (enough for me to spend several years of my professional life on it).

But as a wider lens into the systemic causes of decay and ultimately ecosystem collapse, like of an entire town or species as the post below jauntily tours, it misses the forest fire for a few fallen trees. AI of course is being used in many different scientific domains to map deeper foundational, existential threats, but given where we are in the hype curve with LLMs there’s a lot of money (and attention and compute cycles and energy consumption) going into more surface-level predictive tech. This is probably because it’s a lot easier to fill a pothole than move a city off of fossil fuels.

Speaking of fossils, here’s the old post.

I teach a course at CU Denver on urban technologies where, to the bewilderment of most students, we begin by studying a decrepit urban typology somewhat unique to the American West: ghost towns. Where students are expecting robot cars and sparkling sci-fi skylines they get depopulated ruins and crumbling foundations. It takes several sessions before students appreciate why we start this way. The afterlife of towns and cities exposes quite a bit about why they were created, what assumptions they were built upon, and what larger systems they are enmeshed in. If these towns are ghosts, how exactly did they die?

Shiver Me Timbers

Prefer to listen to this post? (It’s really me, not AI!)

Greetings travelers! Do you remember what you were doing on September 19 last year? Perhaps, like me, you were avoiding any co-worker, friend, or family member enthralled by the very 1990’s Talk Like a Pirate Day parody holiday. You know these people, texting you “Ahoy Matey!” and “Arrrr!” and “Avast, me hearty!” This was humorous once, maybe, in 1997. Why do people do this? Aren’t pirates horrible people — thieving, conniving, ruthless people? There’s no Talk Like A Serial Killer Day, obviously and thankfully. On today’s itinerary we’re going to be heading back out on the Seven Seas to get to the dark heart of piracy and how it has been depicted in horror cinema in a segment called Shiver Me Timbers.

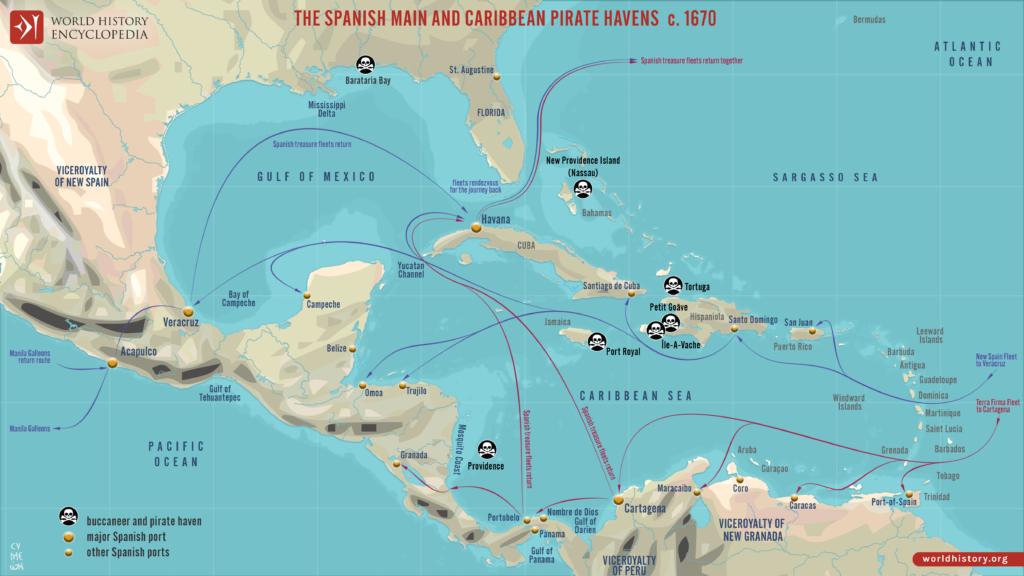

Yes, tourists, we’ve been here before … or at least near here, exploring underwater horror on itineraries called Doom Shanty and Maybe Shark, but today we’re looking at the real history of piracy. International maritime law defines this as “any illegal acts of violence or detention, or any act of depredation, committed for private ends by the crew or the passengers of a private ship or a private aircraft” outside the jurisdiction of any State, such as on the high seas. And, indeed, piracy continues to this day, primarily in the Indian Ocean, the Gulf of Guinea in West Africa, and some of the seas surrounding Malaysia and Indonesia. But these pirates — equipped with GPS, rocket-propelled grenades, and very fast boats — are not what we think of when we conjure the image of pirates.

Most of our modern fictional depictions of pirates take their inspiration from what is called The Golden Age of Piracy which lasted from the 1650s to the 1730s. More precisely, pirates in today’s culture have almost exclusively been filtered through just three 19th century characters: Captain Hook from Peter Pan, Long John Silver from Treasure Island, and Sinbad from Sinbad the Sailor. These tales, centered mostly in the Caribbean, present pirates as adventure-seeking swashbucklers, dirty and rum-sodden, causing trouble for European navies, but generally just using the ocean as a lawless crossroads of mercantile opportunity during a period when shipping to and from Europe’s colonial holdings in the New World was reaching its apex. Put another way: pirates were thieves on ships who would board vessels laden with cargo to take it.

Were pirates criminals? Technically yes, though the concept of international law governing activity outside of boundary waters did not really exist. Complicating the ethics here is the fact that, though much piracy (technically buccaneering) was waged against state powers, later phases of piracy evolved into privateering where European states officially sponsored pirate raids on competing powers. What started as motley crews of independent criminals eventually became official sorties licensed by “letters of marque” permitting and financially encouraging robbery to hobble state adversaries. So basically whether you consider pirates criminals or not it comes down to what you consider worse behavior: theft by an underclass waged against state power or theft by an underclass waged on behalf of a state power. Either way, pirates were pretty cool, even if they did some horrible things.

Most of these horrible things were done in service of finding the most valuable cargo or hidden loot. But the acts themselves were useful in creating a reputation that would enable the taking of frightened merchant ships without a fight. This reputation is one of the reasons we have so much historical documentation of pirate atrocity. Take the torture of merchant captains and crew. Techniques included “woolding” — tying a rope around a victim’s head and tightening it with a metal bar until his eyes popped out. Or lighting slow-burning rope ends between a captive’s fingers and toes and in their beards. Or having salt or brine poured into the wounds caused by whipping. Extreme tortures involved bodily mutilation, including forcing the victim to eat their own or others’ body parts. (We can look to the notorious pirate Edward “Ned” Low for that one.) While simply working on board a sailing vessel was a threat to one’s limbs — mind those rigging lines and anchor chains! — it’s no coincidence that the stereotype of pirates that comes down to us usually involves missing body parts: hooks replacing hands, wooden pegs replacing legs, patches over missing eyes, and nothing replacing teeth displaced by a perfidious (and undiagnosed) lack of Vitamin C.

And then there were pirate executions — punishment for misbehaving pirate crewmen, but also for merchant captives. Alas, this almost never involved making anyone walk a plank. Why be dramatic about drowning someone when you could just toss them overboard? At gunpoint or chained to a cannonball, if need be. Like torture, pirate executions’ larger purpose was psychological: instilling fear in others was easily as important as the act itself. The most spectacularly awful form of pirate execution had to be keelhauling, where a victim was tied to a rope, thrown in the drink, then dragged under the ship, usually from one side to the other. Before drowning the unfortunate human anchor would usually be shredded by the barnacle-encrusted wooden hull of the ship. Less dramatic but better fodder for future fictional tales was marooning, where treasonous or thieving crewmates were left on a deserted island, usually with a bottle of water and a pistol. Die of thirst or just take your own life. Let’s not forget flogging by the fearsome cat o’ nine tails, a technique called “sweating” a prisoner by making them run around the mast while being stuck with swords and daggers by the whole crew, and of course just burning a ship full of sailors wholesale. Or, perhaps worst of all, simply enslaving all captives and keeping them in bondage or selling them at one of the dozens of colonial holdings desirous of manual labor.

It’s pretty obvious how the lore of piracy would make good material for a horror movie. And yet, pirate horror is a relatively small sub-genre, a couple dozen pictures generously. This might be explained by the fact that modern depictions in film have largely portrayed them as, at worst, antiheroes and often as merely roguish protagonists. Depraved as their documented historical actions might have been, we seem to identify with and even valorize pirates, idolizing their freedom from allegiance, their flouting of law, even or especially their throwing a wrench into the cogs of capitalism at the very birth of capitalism. Interestingly we can thank Disney both for the sanitizing of the pirate character in modern depictions and for a revival of pirates as undeniably scary. With the former, think of the bumbling Captain Hook in 1953’s Peter Pan or the family-friendly cast of treasure-seeking rogues in Disney’s Pirates of the Caribbean theme park attractions. For the latter, look to the first Pirates of the Caribbean film The Curse of the Black Pearl, from 2003, featuring a legitimately menacing crew of undead pirates condemned to walk (and swim, though they actually walk along the sea bottom) for eternity and a Davy Jones whose writhing beard of octopus tentacles is almost more haunting than Medusa’s locks. This film, while firmly an action adventure piece, reminded viewers just how scary pirates could be.

But there is a tradition of pirate horror and, travelers, it calls to us as clearly as a bottle of uncorked rum. Let’s take a swig.

1962 brought us the Hammer Productions period piece called Captain Clegg (also known as Night Creatures). Peter Cushing here plays a village parson named Blyss who presides over a coastal congregation when the Royal Navy led by a Captain Collier arrives to investigate reports of alcohol smuggling. This village is purportedly the final resting place of the pirate Nathaniel Clegg, but it is terrorized at night by horseback-riding skeleton-ish figures called “Marsh Phantoms”. This film has plenty of pirate ambience, though none of it takes place at sea and there’s nothing supernatural about any of it. To try to get to the bottom of the phantom riders a former crewmate of Clegg’s, muted through torture by the captain, exhumes his grave, finding it empty. Eventually — spoiler! — we come to learn that Parson Blyss is in fact Captain Clegg, spared at the last moment by his executioner, and that the phantoms are merely villagers meant to run off out-of-towners. Captain Clegg is an atmospheric film, adequately creepy, if maybe a little tame in the final frights and gore tally.

Do you consider Halloween to be John Carpenter’s masterpiece? You’re probably right, but there are film buffs out there who would argue with you, pointing to 1980’s The Fog as a superior film. The cast itself is a pantheon of horror icons already established and soon-to-be: Adrienne Barbeau, Tom Atkins, Jamie Lee Curtis, her mom Janet Leigh, Hal Holbrook, and even John Houseman. The conceit of using a fog rolling into a coastal town as both a metaphor for encroaching evil and a literal atmosphere of low visibility and dread is a masterstroke that has been repeated multiple times since. The story here, helpfully narrated by Houseman to children sitting around a campfire at the film’s outset, details the crashing of a clipper ship which mistook a campfire for a lighthouse. All its crew drowned. Meanwhile, we learn the real reason for the crash was that locals 1) did not want the leprosy-addled owner of the boat to establish a colony nearby and 2) they wanted his gold. The town, you see, is founded on a lie. Enter the fog, glowing ominously at first out at sea and slowly coming ashore. Barbeau here plays a radio DJ broadcasting from a lighthouse who serves as a kind of omniscient lookout for the townsfolk as the fog slowly reveals the undead sailors come back to exact vengeance. Specifically they come looking for six victims to match the six original local conspirators. The film ends with five deaths, though, so you know there’s an epilogue. This is a great movie. The fog itself I find far scarier than the avenging leper-pirates. It’s used especially well inside the church and around the lighthouse at the film’s climax, hiding basically motionless killers. (Sidenote: the blocking in these scenes was obviously and effectively re-used by Carpenter in the interior scenes of Prince of Darkness.) I love The Fog and you should too.

The true sign of the establishment of a theme in a horror sub-genre of course is whether there are any examples of truly awful films based on it. We have that, my travelers, we definitely have that. I present to you Jolly Roger: Massacre at Cutter’s Cove from 2005. This is made-for-TV quality shlock that starts promisingly enough — on a beach, around a campfire, with all the trappings of a slasher. Canoodling teens find a treasure chest just sitting on the beach, they open it, and an undead pirate appears. It’s not much more complicated than that and let me say I was all in. But then, after an initial mini-massacre, the story moves to the city and becomes a police drama. You see, the really-not-very-jolly pirate named Roger has returned to avenge his keelhauling death at the hands of his mutinous crew. To do this he wants sixteen heads of the descendants of this crew, all of whom helpfully live at Cutter’s Cove. Look, if you like decapitations by cutlass this is the film for you. Nearly all the deaths, gory as they are, are decapitations. The evil pirate is pretty great, honestly, looking more like a zombie than the swashbuckler he might once have been. I would have preferred if this film stayed a straight slasher. Dead men may tell no tales, but undead men sometimes tell tales you wish were better tales. Oh well.

I’ve put together a list of side excursions you can undertake on your own — no charge — if this short jaunt is not enough for you all. If you’re a fan of Tombs of the Blind Dead (and who isn’t?) you will like The Ghost Galleon from 1974. If you’re more into modern day pirates engaged in terror see The Island from 1980 or Deep Rising from 1998, a creature feature masquerading as a pirate film (and underloved, in my opinion). You know where you might get the most undead pirates for your buck? 1998’s Scooby-Doo on Zombie Island. I’m not kidding. Don’t laugh. Watch it and tell me I’m wrong. Prefer the sub-genre of reality film horror? Check out CrossBones from 2005. Basically Survivor but with ghost pirates running about. Maybe the most straight-up pirate horror out there, which is to say the film featuring the most stereotypical pirate stuff in a horror storyline is Curse of the Pirate Death from 2006. If you are actually a fan of Talk Like A Pirate Day: 1) watch this film and 2) do not contact me on Sept. 19. The most recent entries in this category Pirates of Ghost Island, Dark Waves, and Ship of the Damned are all variations on the same theme: what happens when you come between undead pirates and their booty. All of which raises an interesting point: where is the pirate horror set during actual pirate times several centuries ago? With the exception of Captain Clegg, I could find none. Filmmakers, contact me. I have ideas.

Well that’s it, mateys. Whoops I did it. That’s it, my traveling companions. Time to flee like bilge rats. Until our next journey, mind your step on the wet decks and make sure you eat lots of citrus. Thank you!

A full list of the movies mentioned above can be found at Letterboxd. Find out where to watch there.

The Terror Tourist is my occasional segment on the Heavy Leather Horror Show, a weekly podcast about all things horror out of Salem, Massachusetts. These segments are also available as an email newsletter. Sign up here, if interested. The segment begins at 19:03 in this episode:

Intolerable calamities

Walked through one of the four cemeteries in my neighborhood today. Among many heroes of the American Revolutionary War interred here is Reverend Moses Mather. He served as a Patriot in the war, but is most remembered for being snatched out of his church mid-sermon by the British and imprisoned on Long Island for many months before his release in a prisoner exchange. The headstone reads “Death is a debt to nature due which I have paid, and so must you.”

Here’s a section that caught my eye from his essay America’s appeal to the impartial world:

These unheard of intolerable calamities, spring not of the dust, come not causeless, nor will they end fruitless. They call on the Americans for repentance towards their maker, and vengeance on their adversaries. And can it be a crime to resist? Is it not a duty we owe to our maker, to our country, to ourselves and to posterity? Does not the principle of self-preservation, which is implanted by the author of nature in the human breast, (to operate instantaneous as the lightning, resistless as the shafts of war, to ward off impending danger) urge us to the conflict; add wings to our feet, firmness and unanimity to our hearts, impenetrability to our battalions, and under the influence of its mighty author, will it not render successful and glorious American arms?

It was not a crime to resist in 1775. It is not a crime to resist now.

Phobos Ex Machina

Greetings, my travel buddies! I was reviewing our pre-trip paperwork and I notice that not all of you have filled out your required forms. Specifically, please reference the sheet where you’re asked to list your phobias. This is important, especially when traveling in new places. Those of you suffering from any of the following phobias are advised to contact me personally to discuss: claustrophobia (fear of enclosed spaces), acrophobia (fear of heights), amaxophobia (fear of being a passenger), or emetophobia (fear of getting sick abroad). So many phobias. So much to fear.

To thank for all this, at least linguistically, we have Phobos, son of the Greek god of war Ares and the goddess of love Aphrodite. Lion-headed Phobos is the personification of fear and panic, emotions felt in both love and war. My tourists, this week we’re picking up where we left our last overseas adventure on the Mediterranean. We’re headed from Albania southeast to the land of Greece, both ancient and modern, in a segment I call Phobos Ex Machina.

It cannot be overstated how much the history of drama is rooted in ancient Greece. Dante, Shakespeare, Mary Shelley, T.S. Eliot, Arthur Miller — all indisputably geniuses, but even they would admit to reworking tropes hammered on the anvils of Greek dramaturgy. Themes like hubris, where a character’s excessive pride becomes their undoing; or the question of whether we can act of free will or if our lives are fated to happen a certain way; or the idea of supernatural retribution for human transgression; even family curses, that bedrock of so much 19th century American fiction, come from Greek myth.

Look, the Greek civilization invented tragedy. And I can’t think of a single tragedy that does not involve death in some important way. It’s an inevitability. This doesn’t make all Greek tragedy horror, at least by our modern definition, but there is a lot that is straight-up horrific in the stories passed down through the millennia.

Let’s see some sights before we journey to film.

Some versions of Greek myth attribute the creation of humans to the Titan Prometheus. As if making inferior beings weren’t enough of an affront to the Olympian gods he then steals their fire so that humans may be warmed by and cook with it. This pisses off ol’ Zeus who decides not to kill Prometheus, but to lash him to a rock where an eagle comes daily to rip out his liver (the locus of emotion in the thinking of ancient Greeks). You’d think this disembowelment would soon lead to Prometheus’s death and an end to the torture. But Zeus thought of this and condemns Prometheus to re-grow his liver each night so that it may be pecked out again the next day. Guy just wanted to give humans a little heat and light. It’s brutal.

OK step off this liver-stained rock over here and let’s talk about Cronus, youngest of the Titans, who upon overthrowing his parents, Uranus and Gaia, learned that his children would also become patricidal maniacs. Cronus sires most of the cool Olympian gods — Demeter, Hera, Hades, Poseidon — but fearing for his life he swallows them all whole at birth to prevent their possible future actions. His baby-mama Rhea eventually decides she’s had enough and delivers her last child, a kid named Zeus, secretly on the island of Crete, swaddling a stone — and in some versions “nursing” it to prove it is a baby, which sprays milk all over the place creating The Milky Way — and presenting it to Cronus who promptly swallows it. Zeus grows up in secret and eventually confronts Cronus with an emetic which makes him disgorge all the sibling gods (and the rock). Cronus ends up overthrown per prophecy and imprisoned in Tartarus. The lesson here: cannibalizing your family is a bad idea in almost all circumstances. Write that down.

Avert your gaze this-a-way for the story of Medusa — horrific in a bunch of different ways. Maybe more troubling than her eventual snaky form is that she really didn’t do anything to deserve her fate. Medusa was just a beautiful Greek human, minding her own business, who attracts the attention of Poseidon, who rapes her. He does this for some reason in Athena’s temple. Athena is enraged at this desecration and, in an act of incredible injustice, blames Medusa and punishes her by transforming her into a hideous Gorgon: hair replaced by writhing serpents, skin turned green, and a gaze that turns any who look upon her to stone. Even in death she suffers indignity with Perseus using her severed head as a weapon before embedding it directly on the shield of Athena. And you know who suffers not at all for any of this? That sex pest Poseidon. It’s no wonder Medusa is a modern feminist icon.

Travelers, it’s possible you don’t know the story of King Erysichthon. Arrogant, greedy, and not at all an environmentalist, Erysichthon ordered his men to chop down trees all over his kingdom. They comply but refuse to cut down one tree covered with offerings to Demeter. So Erysichthon grabs the ax and does it himself. Bad idea, for this tree is a wood nymph, who in her dying breath curses Erysichthon to a life of eternal hunger. The more he eats, the hungrier he becomes. He eats through all the kingdom’s resources, eventually selling his daughter for food. Finally, with nothing left to eat, he begins to devour his own flesh. If you’re hearing both J.R.R. Tolkien and Stephen King in this tale, well, that’s the seemingly endless echo of Greek horror.

It would be difficult to find a modern horror movie that doesn’t have some precedent, conscious or not, in Greek mythology. This week’s group watch film, Pig Hill, for instance, brings to mind the sorceress Circe and her transformation of Odysseus’s men into swine for invading her home. You could argue that these echoes are a function of there only being so many stories and allegories of the human condition — and that the Greeks got there first, at least in the Western canon. Though these are not sequels in any narrative sense, tracing the evolution of ideas, like pig-men, through the centuries is itself an insight into how human behavior changes or doesn’t. Circe turned men to pigs because they were acting like pigs.

Given this lineage it might be a fool’s errand even to talk about Greek horror films. It’s all Greek to us in some ways, but there are some films in the last century which are especially so. Let’s sprint through this marathon, shall we?

I would be kicked off this podcast if I did not begin this race with The Gorgon from 1964. Directed by Terence Fisher, starring Christopher Lee, Peter Cushing, and Barbara Shelley, The Gorgon tells the story of a village in pre-war Germany terrorized by a series of murders that result in victims turned to stone. What it is not is a retelling of the Medusa myth per se, merely using her petrifying power/curse as the killer. And what a great killer she is. A threat that cannot be viewed — which kills simply by being seen — is almost postmodern in its symbolic freight. I’m pretty sure Fisher understood what he was working with. The film opens with a man painting his nude girlfriend literally sitting motionless under his male gaze. The gorgon possesses the reversal of this power, the ability to stop male desire and action. One major difference between the myth and this film is that the gorgon can somehow revert to her human form, making her all the more elusive and dangerous. This film is everything you’d want from a Hammer production in full gothic mode. Except maybe the actual gorgon, called Megaera here, whose snake-filled wig is underwhelming even for the effects of the time. Christopher Lee is noted as saying “The only thing wrong with ‘The Gorgon’ is The Gorgon!”

1976 brought us good campy Greek horror in the film Island of Death. Written and directed by Nico Mastorakis, it is called one of the most widely banned films in the world — and I now understand why. Its tagline is “The lucky ones got their brains blown out!” Two British sexual perverts travel to the island of Mykonos for a killing spree. This film starts with the rape of a goat, proceeds to a crucifixion, dangling a man from an airplane and flying it into the sea, decapitation by bulldozer, a toilet drowning, a death in a pit of lye … and to top it all off, an incestuous twist. Wow this film. 50 years later it still seems outrageous.

Taking our journey slightly closer to the actual country of Greece is the Video Nasty usually known as Anthropophagus from 1980, an Italian production from Joe D’Amato and George Eastman. Anthropophagus is the story of a group of tourists stranded on a small Greek island and stalked by an especially hungry and especially gory cannibal. Said cannibal, the titular anthropophage, is himself a shipwreck survivor who years ago was forced to eat his son and wife to stay alive. Though this movie is a pacing train wreck, it is an important film in the evolution of gross films as it features an infamous scene where the killer strangles a pregnant woman then feasts on her fetus. You have to give this guy one thing: like Erysichthon, he’s ravenous to the end, eventually eating his own entrails when he takes a pickaxe to the gut.

Greek horror in the 80s went creature feature with Blood Tide, featuring James Earl Jones, and full slasher in The Wind starring Meg Foster. How about underground Greek art horror? Got that in 1990 with Singapore Sling: The Man Who Loved a Corpse. This movie basically is every genre all at once, though from this description you’d be forgiven for not believing it was a comedy.

Evil, the first Greek zombie film from 2005, is known to film nerds as “My Big Fat Greek Zombie Apocalypse”. And that’s pretty much all it is known for. We also have Guilt from 2009, a Cypriot fusion of historical horror (mostly about the invasion and division of Cyprus in the 70s) with psychological horror. Greece seems a perfect setting for folk horror and we finally get that in 2019 with the slow, too-long Entwined. The final stop on our tour is the recent Its Name Was Mormo from 2024, a Cypriot found footage tale of a family bedeviled by an ancient demon and presented through a police reconstruction of events.

So basically, though Greek horror films are not many, the industry has experimented in most of the major sub-genres. There are some omissions: as central as the Mediterranean is to the culture and history of Greece you’d think there would be more sea-based horror. (Only Blood Tide makes this central.) Also, being so close to Italy you’d think there would be a lot more Greek gialli, which does not seem to be the case. As far as I can tell there is no endemic or particular Grecian flavor of horror. But then again, so many of our modern monsters, themes, and even methods of killing were presaged eons ago in Greek drama. So maybe they are just taking a well-deserved break.

And now it is time for us to take a break, travelers. I hope this did not trigger any of your latent, undocumented phobias. But if it did you now know who to blame. Until next time!

The Terror Tourist is my occasional segment on the Heavy Leather Horror Show, a weekly podcast about all things horror out of Salem, Massachusetts. These segments are also available as an email newsletter. Sign up here, if interested. The segment begins at 9:22 in this episode:

Terror Tourist … Traps!

Greetings, travelers! Today we’re going to do something a little different in the interest of keeping everyone safe and happy. Think of it like the pre-flight briefing when you get on an airplane: the journey will begin just after we tell you how not to die. My subject today is not quite so grim, though the movies which exemplify it certainly are, but it is just as important. Now, if I said just the word “tourist” to you what would naturally be the next term that comes to mind? Guide, destination, bus? NO. Today, my friends, we’re re-titling this segment entirely as we cover Terror Tourist … Traps!

Like obscenity you know a tourist trap when you see one, but the most common types of traps — restaurants, shops, and attractions — are often perfectly lovely and non-trappy. So we should define the term more specifically. An economist would describe a tourist trap as an opportunity (or threat, depending on who you are) that derives from information asymmetry — that is, a situation where one party has more information than the other. In every case that’s relevant today the two parties here are tourists (us!) and the establishments that grow up around or on the way to major destinations and sights that tourists desire. What this means in practice — how the trap is laid — is that restaurants, shops, and attractions prey upon the tourist’s lack of information about a product’s or service’s true quality, actual value, or local authenticity. Statistically speaking as a visitor to a new place you as a tourist will not have the contextual information that the seller does. Add to this imbalance that tourists are often tired, hungry, and/or unfamiliar with local currency, language, and culture. This only increases the precarity of the imbalance.

Why, you may be asking yourself, are we using economists’ definition here? Seems so boring and academic, right? Well we sorta have to use it because — and here’s the twist — like any market-driven phenomenon tourist traps only survive if people pay for their products and services. And survive they do. PEOPLE LOVE TOURIST TRAPS. In fact, many people would not rate a destination highly if it did not come festooned with souvenir shops and familiar chain eateries. Las Vegas for example is what happens when an entire town becomes a tourist trap, but that may be a subject for another itinerary. Travelers, may our companions never include people like this, but they most certainly do exist, I am sorry to say.

Usually it isn’t the major sight or attraction that is the trap, but the ecosystem of hucksters that grows up around it, so many slimy-sharp barnacles on an otherwise beautiful ship hull. (I say usually because of course there are counterexamples. Plymouth Rock, for instance, is both the destination and the trap. Spoiler: this is not where the pilgrims landed. If you believe it is boy do I have a story to tell you about Thanksgiving.) But more often than not the sight or destination is just that, a sight: Mount Rushmore isn’t a trap in itself (that is, if you like white imperialist aspirations defacing native land and natural beauty); the intersection of Broadway, Seventh, and 42nd Street in New York City isn’t any more of a trap than the numerous other confusing intersections in the metropolis; the Hollywood Walk of Fame is, well, it’s just a sidewalk. In each of these cases it isn’t the thing you’re coming to see that’s the problem; it’s all the ancillary operations that set up adjacent to or on top of it expressly to take your money. Put another way: it isn’t the cheese that makes the mouse-trap; it’s the spring-loaded metal hammer bar.

But another way to define a tourist trap — and frankly the one that troubles me most — is to look at destinations where the tourists themselves congeal to form the “trap”. This is the phenomenon of over-tourism, supercharged during the era of social media. Think of the Trevi Fountain in Rome, the Red Light District of Amsterdam, or even the geysers of Yellowstone National Park. While many of these will be accompanied by certain kinds of trappy vendors, the biggest trap is the other people there just like you. Too many of us. Babies crying, elderly moving too slowly, jerks not staying on marked paths, weird eddies in the flow of humanity caused by people trying to frame the perfect photo, and just the sheer number of people burdening infrastructure that was never intended for the swarm. To smoosh together insights from Dorothy in Oz and Jean-Paul Sartre: hell is not where you go, it’s who you meet along the way.

Tourist traps are a global phenomenon. Like so many gross things, America did not invent tourist traps, but hoo boy have we perfected them. And that I think is because we figured out how to spring traps at any scale and, frankly, anywhere. Sure, there are the obvious places for traps — scenic overlooks of natural grandeur (like The Grand Canyon), or sites associated with conspiracy or mystery (like Roswell, NM) or sites that promote a kind of shared national or regional myth-making (like The Alamo). But the real tourism innovation in the United States — and the set-up of so many horror movies — is that we figured out how to lay traps along the way in the middle of nowhere. America invented roadside attractions, places that make you stop on a journey and literally become a tourist even if you were not one when you set out. Because how could you not stop to see a collection of taxidermied two-headed calves? Especially when there’s been no other thing to see (or place to relieve yourself) in the previous 100 miles or will be in the next 100. The informal marketplace of oddities and discarded Americana may be the most important evolution of tourist traps in the 20th century. And this could not have happened without three things that make the US unique: 1) an insatiably consumerist public; 2) a love of the automobile; 3) vast distances — especially in the middle of the country — where there is nothing of note to see along the journey.

In planning the swerve to talk about movies in this segment I considered many paths. Even casual horror movie audiences could point to the sub-genre of trap-based films like the Saw series, the Cube series and the dozens of films inspired by escape rooms or even Halloween haunt attractions. But these aren’t tourist traps exactly. Going the other direction there’s the path that simply features tourists being dumb or naive. These are the subjects of films like Midsommar, Hostel, The Hills Have Eyes, or even The Rocky Horror Picture Show.

While not exactly traps, gas stations, especially when there’s nothing else around, are central to so much of the horror canon, being featured prominently in the best and worst of the genre: The Texas Chain Saw Massacre, Maximum Overdrive, Friday the 13th, and countless films where a grizzled eccentric grumbles to teenagers that they shouldn’t go down whatever road. This feels close to tourist trap horror, but it isn’t exactly. In the same way, films like Motel Hell and Hell Fest are adjacent by featuring things you find associated with tourist traps — in this case seedy hotels and carnivals. But, again, not exactly tourist trap films per se.

There are certainly films whose settings are literally roadside attractions where kitsch turns deadly. For example, the main characters in House of 1000 Corpses are on a mission to write a book about offbeat roadside attractions when they are forced by a flat tire to visit a particularly gruesome one, falling right into the trap of Captain Spaulding.

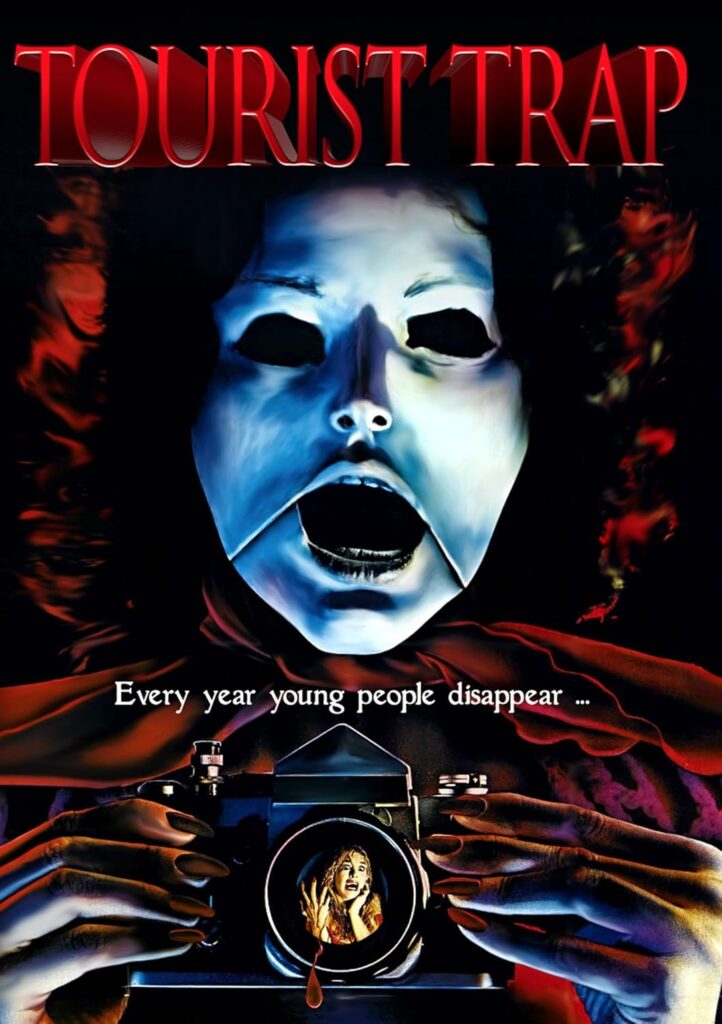

Luckily, we have a film from 1979 that must certainly fit the bill: David Schmoeller’s Tourist Trap. Known largely because it somehow received a PG rating and could be shown more widely than other films of its ilk (and also because of its striking VHS cover art), Tourist Trap continues many themes and tropes sealed up in earlier decades by the numerous remakes of House of Wax. Our teenagers here stop with a broken-down car at Slausen’s Lost Oasis, a roadside museum (and also gas station) full of animated mannequins run by the miscast but still decent Chuck Connors as Mr. Slausen. He’s welcoming enough, explaining how his brother created all the mannequins but that he went to pursue his career in the big city. He also relates that the creation of the interstate system basically bypassed his tourist attraction (a theme you’ll remember from Psycho) which is why it is so run-down. As Slausen offers help with the car, various (and, of course, eventual) victims discover that the mannequins are animated by more than gears and levers. There’s something supernatural going on, as objects move by themselves and the mannequins off the crew one by one. But there’s also a masked human killer stalking around killing some by plastering them alive, creating mannequin corpses for display. Slausen thinks it must be his brother. Travelers, it is not Slausen’s brother … which is why he wears a mask. Tourist Trap gives us a closing shot of a final girl, gone completely insane and continuing on her roadtrip with her original set of friends now rotting beneath their mannequin dressing. This is a decent film for its time, calling back to classics of the horror genre but also firmly a part of the slasher renaissance. It even throws in some psychokinetics, a sub-theme of the 80s. But if we’re being honest, it’s not a tourist trap — even if our tourists become trapped. The protagonists of this tale stop because their car breaks down. And other than claiming the Lost Oasis was once a museum, that’s not the nature of the destination anymore. He’s just a crazy old man with a gruesome collection. Cool title though!

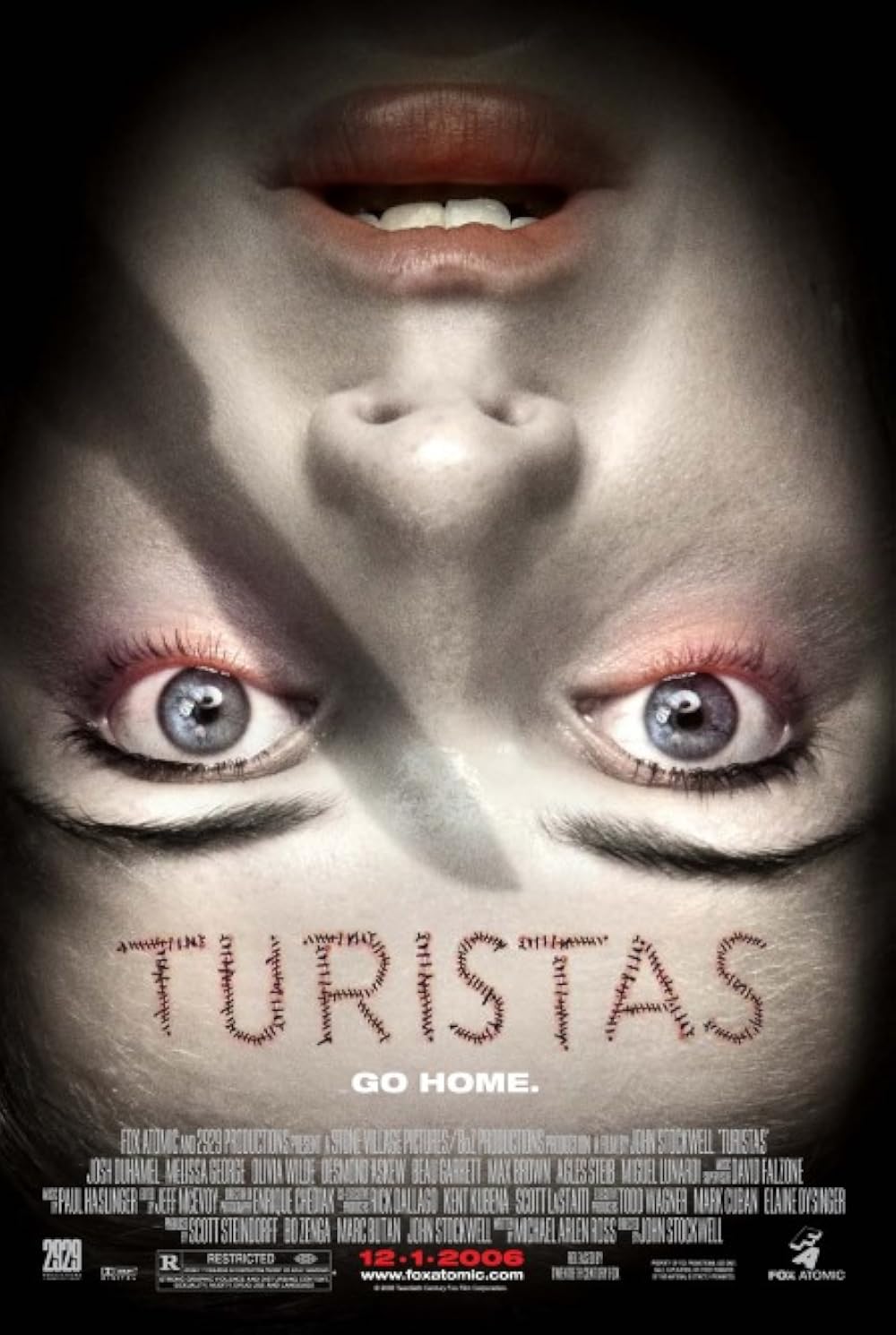

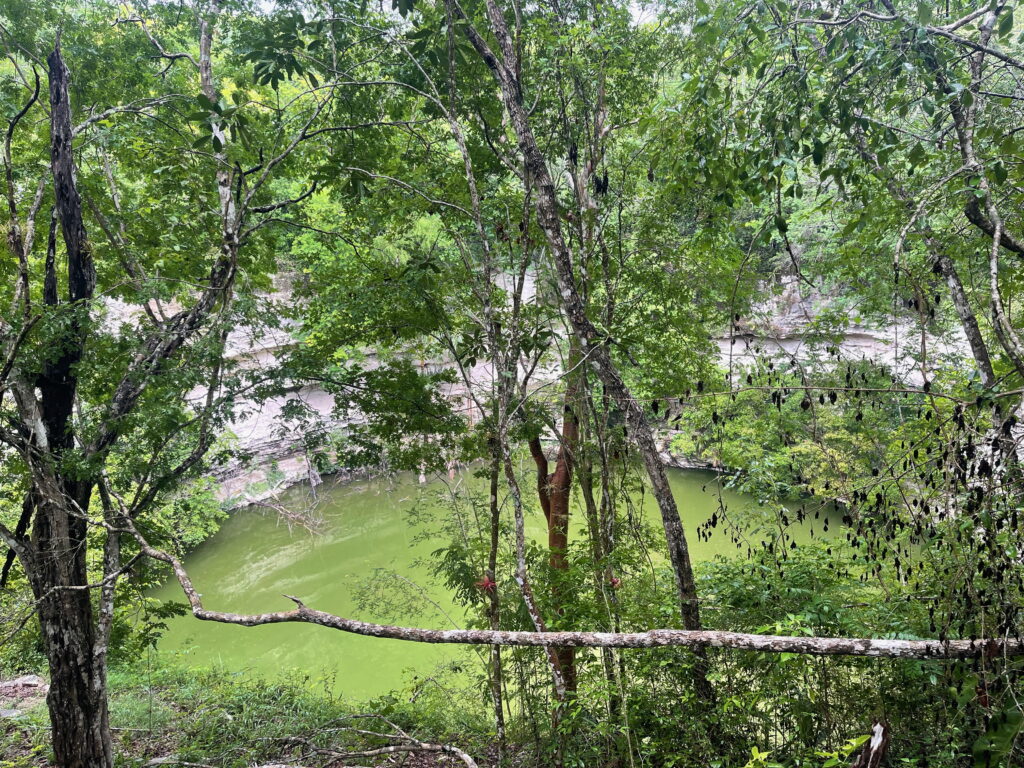

Leaving the US briefly we come upon Turistas from 2006. Part of a spate of films seeking to capitalize on the formula of 2005’s Hostel, this film is about a bunch of American and British tourists vacationing in Brazil when their bus swerves off the road and careens down a mountainside. Everyone survives, but they sure are stranded. The backpacking turistas we mainly follow here are Olivia Wilde as the adventurous little sister of the overprotective Josh Duhamel and the world-wise Melissa George who teams up with them. While they initially find beachside fun to pass the time waiting for the next bus, the film quickly turns dark when they wake up after a night’s revelry with all their possessions and money stolen. Wandering around looking for help our crew eventually finds a young local with passable English who commits to take them to his uncle’s house in the woods for help. Red flag, tourists. This is a red flag. You might even call it a landing flare because shortly a helicopter — and the organ harvesting crew it carries — finds them. You see this is all part of an organized criminal enterprise which, it is explained, seeks to provide transplants for needy Brazilians from the organs of exploitative gringos. They literally describe it as tourist “give back”. So we finally have souvenirs, except that it is the tourists who give them for one hell of a price. Turistas is a better film than it should be. It’s remarkably nuanced about the mistakes Americans make abroad and contains a pretty compelling sequence in the jungle where our backpackers try to outsmart the marauding surgeons in underground cenotes. Also it contains the classic line “Do you guys mind if I go topless?” By the prudish standards of early 2000s horror this is a welcome wink to earlier eras — and it does not disappoint.

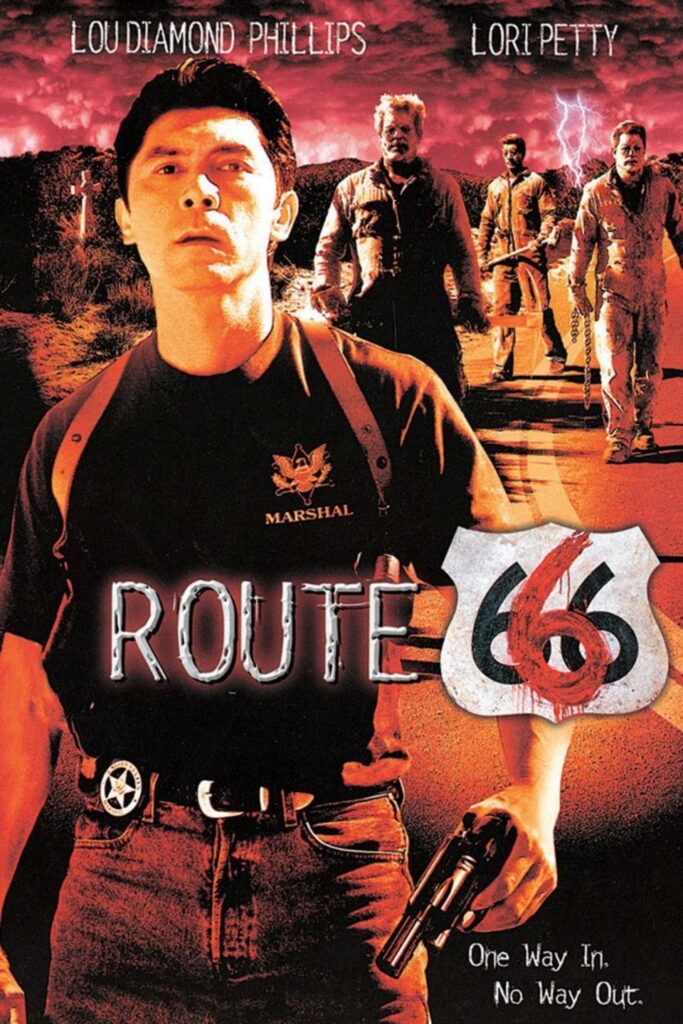

The last stop on our journey/lesson today is the 2001 action-horror Route 666, starring Lou Diamond Phillips and Lori Petty as federal marshals transporting an informant across the American southwest to a trial date in LA. Fending off hitmen and having to deal with very unhelpful local authorities, the marshals learn of and eventually take a condemned road called Route 666, an offshoot of the famous Route 66, mostly now replaced by interstate highway (though it still exists in places). Route 666, we learn, was closed to travel after a prison road crew accident in the 1960s. This road crew of now-dead convicts haunts the road, manifesting as part ghost, part zombie and wielding all their old tools. There are only so many ways to be killed by a jackhammer or a steamroller, but tourists let me tell you, every one of these ways is a delight to watch. As a subplot we learn that one of the undead convicts is actually the father of Lou Diamond Phillips’ marshal character. It’s a warming father-son storyline that actually involves them helping each other out, wrestle match tag-in style. Oh also these convicts didn’t have an accident; they were murdered by the corrupt local authorities. There is comeuppance, naturally. It’s all ridiculous and very low-budget, but this film nails the setting — clearly it was filmed onsite on one of the myriad deserted roads that dot the American southwest. That’s half the spooky factor right there. I’d call this an action movie with horror elements rather than the other way around. But it’s also not really a tourist trap movie, despite its obvious nod to Route 66, the origin of so many traps in the first part of the 20th century. There are no tourists in this movie and the only things trapped are the souls of the convicts from this cursed side-road.

Here’s the thing, my travelers. I would love to be proven wrong, but I don’t know that there is a horror movie that is precisely about tourist traps as we conceive of them. Such an opportunity! Imagine taking either of the definitions as the setting for a film: a world of overpriced tchotchkes and bland food, or a place where there are just too many damn people trying to get at a singular sight. Except scary or gory or creepy. I would like to watch this movie, should it ever exist.

So consider yourself warned, travelers. You now know as much as I do about the perils of tourist traps. We will do our best to avoid them, but … hey wait is that a collection of rusty mufflers made into vaguely human shapes in that farm field? Only $40 to view? I’ll be right back.

Thanks for joining. Until our next itinerary!

A full list of the movies mentioned above can be found at Letterboxd. Find out where to watch there.

The Terror Tourist is my occasional segment on the Heavy Leather Horror Show, a weekly podcast about all things horror out of Salem, Massachusetts. These segments are also available as an email newsletter. Sign up here, if interested. The segment begins at 3:20 in this episode:

The Nightmares of Illinois

Special note: this is a post about terrible things that have happened in Chicago and how they have been represented on film. Terrible things are happening in Chicago right now in broad daylight with not a serial killer in sight. If you live in Illinois and are concerned about or have witnessed human rights violations contact the Illinois Coalition for Immigrant and Refugee Rights (ICIRR) Family Support Network and Hotline at icirr.org or 855-435-7693. They run regular trainings and an alert text network. They could absolutely use your help.

Greetings, travelers! I hope you’re ready for another itinerary of place-based frights. Today we’re heading to the land that made me in a segment called The Nightmares of Illinois.

Every state has examples of real depravity exhibited by real people. Illinois is of course not unique. But it is where I am from, which is all the qualification needed to be your tour guide. So let’s get walkin’!

If you wanted to rank states by some metric of horror you could look at total serial killings. California leads the pack by a mile, followed by New York, Texas and Florida. Which is exactly what you’d expect. These are our four most populous states. But there’s something about serial killers from the American Midwest that captures the public imagination — and serves as inspiration for movies — in a way that other regions do not. For example, some of the most classic cinematic horror of the 20th century comes from the gruesome exploits of the grave-robbing skin-wearing murderer Ed Gein. Psycho, The Texas Chain Saw Massacre, The Silence of the Lambs — all from Gein, at least in part. And then there’s Jeffrey Dahmer, Milwaukee’s most infamous cannibal predator. But that’s up in Wisconsin. Let’s travel about 89 miles south.

Chicago may be the origin for the idea of a serial killer. Or at least the earliest documented archetype of the behavior of what we’d later call a serial killer. Those of you who have read Erik Larson’s The Devil in the White City will know H. H. Holmes, pseudonym of a man who never met a category of crime he did not undertake: fraud, forgery, bigamy, horse thievery, and of course murder. Lots of murder. He confessed to killing 27 people — many of these confessions have been disproven, though there were likely other victims to which he did not confess. Part of Holmes’ notoriety comes from his “Murder Castle”, a building on the south side of Chicago used to house visitors for the World’s Columbian Exposition held there in 1893. Contemporary journalists reported that Holmes asphyxiated his victims with gas lines, suffocated them in sealed vaults, and tortured them with various medical experiments. Many of these methods seem questionable based on modern research, which says as much about journalistic sensationalism and the public’s fascination with it as it does about Holmes’ pathological inability to tell the truth. But H. H. Holmes was a sadistic killer — that much is indisputable. He was eventually caught, tried, and executed. In a possibly poetic ending his death by hanging did not come from a broken neck but rather slow, twitching asphyxiation after dangling from the gallows for twenty minutes.

Now, my tourists, if you were a visitor to the Columbian Exposition at this time and you craved the best encased meats Chicago could provide you would have headed 12 miles north to find Adolph Luetgert, the “sausage king of Chicago” and his factory. Hope you got a nice brat during the time of the Expo because in 1897 Luetgert killed his wife and dissolved her in one of his sausage vats filled with lye, a crime for which he was convicted the following year. Rumors abounded that he ground up what remained as sausage and sold her off. This was not true, though it did demonstrably depress sausage sales in Chicago for some time. (Sidenote: remember that scene in Ferris Bueller’s Day Off where he claims to be Abe Froman, Sausage King of Chicago? No relation.)

Mail-order catalogs were all the rage at this time, having been invented by Montgomery Ward in Chicago in 1872 followed soon by Spiegel and Sears, Roebuck. This may have been the inspiration for the mail-order murders of the first female serial killer of our journey, Belle Gunness — known as Hell’s Belle in the press. Belle, a former butcher, collected her victims by placing marriage ads in Chicago newspapers. Interested suitors would be lured to her farm across the border in Indiana. And they would never return. Belle seemed mostly interested in the men’s belongings and wealth, which she would keep after dismembering them and burying them all around her property. Once the remains were discovered, it was determined that, due to the number of body parts, positive identification and even a total victim count would be impossible. It is thought Gunness died in a fire she ordered her farmhand to start in order to kill her children, though the headless corpse said to be hers was 5” shorter and 50 pounds lighter than Gunness. She never definitively reappeared though, despite rumors of escape.

Many murders have claimed to be the Crime of the Century (and most of these end up spawning numerous documentaries and films), but one of the first may be the one committed by Nathan Leopold and Richard Loeb, two students at the University of Chicago in 1924. Motivated by gross privilege and Nietzsche’s concept of Übermenschen, Leopold and Loeb devised a plan to commit what they considered the perfect crime. This ended up being the kidnapping of 14-year-old Bobby Franks as he walked home from school. TL;DR: this was not the perfect crime. Leopold and Loeb murdered the child, attempted to disguise his identity with acid, and dumped him in a culvert. But they weren’t done. Part of their perfect crime included providing misdirection by sending a ransom note to Franks’ family. Through some remarkable forensics for the era a pair of eyeglasses found by the body was determined to be Leopold’s based on the uniqueness of its hinge mechanism. Also a typewriter used for the note was fished from a lagoon nearby and linked to the crime by analyzing its strike patterns. The boys confessed. At trial they were represented by super-lawyer Clarence Darrow of Scopes Monkey Trial fame who succeeded in obtaining sentences of life plus 99 years instead of execution. Jailhouse justice being what it is, Loeb was murdered by a fellow inmate in 1936, purportedly after offering sex. The Chicago Daily News is said to have run the following headline which somehow did not win a Pulitzer: “Richard Loeb, despite his erudition, today ended his sentence with a proposition”. Leopold died a free man, paroled after 33 years. Boo.

Creepy messages written on mirrors in lipstick? Yeah, that’s Illinois too. Travelers, meet William Heirens, the “Lipstick Killer” of the suburbs. Starting in 1945 Heirens would break into women’s apartments and murder them, apparently for the sheer thrill of it. His nom de guerre came about from a strangely-capitalized line scrawled on his second victim’s mirror: “For heAVens SAKe cAtch me BeFore I Kill More I cAnnot control MyselF.” This may be the first killer fulfilling the modern clinical definition of serial killer: someone pathologically driven to murder with no apparent motive. The tale of Heirens is mostly a legal one as he was eventually caught, confessed multiple times, and ultimately recanted. He died as Illinois’ longest-serving prisoner having spent 65 years in the clink, always petitioning for clemency or parole. Something I find humorous is that, although he had taken nearly every course available to inmates (and was the first Illinois prisoner to earn a four-year college degree), authorities forbade him from taking a course in celestial navigation — as if he was going to sail his way out of prison, Magellan-style.

A personal digression, if you will permit me. On the night of July 13, 1966 Richard Speck broke into a dormitory housing student nurses. He killed eight women that night, using only a knife. A ninth escaped by hiding under a bed. Also in July of 1966 my mother was a student nurse, though not with the same program and thankfully not involved in Speck’s atrocity. After the murders but before he was convicted, nurses throughout Chicago were assigned security guards to walk them from their hospitals to their cars or the subway. My mother befriended her guard who eventually invited her to a party he was throwing. She and her girlfriends showed up to find my father splayed out on a dining room table, presumably drunk but certainly having a good time. Thus began the relationship that would become a marriage and bring me into the world six years later. So … thanks Richard Speck? Still, if there’s a hell I hope you’re rotting in it. Music nerd sidenote: Cheap Trick’s first album contains a song about Speck called “The Ballad of TV Violence (I’m Not the Only Boy)”. And before you ask, yeah, that whole album is dark. Cheap Trick got lighter as time went on.

And now we arrive at our final destination, the clown-artist-monster John Wayne Gacy. Let’s make this a short visit as these crimes turn my stomach in a way that the others do not. And maybe this is because Gacy is a core childhood memory. I was old enough to follow the news by the time his crimes (and victims) were uncovered. And I was about the same age as most of them. Gacy killed at least 33 young men after raping and torturing them. 26 of these victims he buried in the crawlspace of his home just outside of Chicago. He also performed as a clown at various performances throughout the suburbs. So if clowns were not already terrifying to young me they became so when I learned this. John Wayne Gacy was convicted and eventually executed on May 9, 1994 — two days after I graduated from college. The only good to come from this devil: his crimes were the motivation behind the removal of a waiting period before law enforcement could begin the search for a missing child. Other states followed Illinois’ lead and would eventually link up efforts in a national network for locating missing children.

So that’s one particular kind of horror from Illinois. There are plenty of others that don’t involve murder. Interested travelers should take side trips to explore the sinking of the S.S. Eastland, the Iroquois Theatre fire, and The Great Chicago Fire. But here we turn briefly to movies. While Illinois ranks pretty high in the unenviable serial killer sweepstakes it ranks comparatively low for its population in the list of horror movie settings, #9 out of 50 states. (You’re telling me there are more horror movies set in New Jersey? Wait, that makes sense.)

Probably the most important horror movie set in Illinois is the original Halloween from 1978. Located in the fictional, presumably downstate town of Haddonfield, this film does a pretty good job of presenting semi-rural Illinois. I mean, minus the unstoppable killer. Most semi-rural towns in Illinois lack unstoppable killers. Except those discussed above. And even those were eventually stopped. Notably, Halloween was not filmed in Illinois — as the mountains you can see in the distance in some scenes will prove. This is the source of “the mountains of Illinois” TV trope that is well-documented.

Child’s Play from 1988 finds a Chicago detective pursuing a serial killer into a toy store. The killer is shot but not before transferring his soul via voodoo into a nearby doll. And thus was born a seven-film franchise, a TV series, and a reboot. The original film was fun. Brad Dourif as the voice of Chucky sells the whole conceit. But it doesn’t have a ton to do with Chicago except maybe the idea that there are enough serial killers running around that one of them might conceivably know voodoo and die in a toy store?

In my opinion the quintessential Illinois horror has to be Candyman from 1992. Shot in the real-life horror of Chicago’s despicable approach to public housing known as Cabrini-Green, it deftly weaves street-level verisimilitude, urban legend, and folklore. Come for the scenes of a Chicago near north side just before gentrification; stay for the exceptional performances of Tony Todd and Virginia Madsen.

The Relic from 1997 uses Chicago’s Field Museum of Natural History as the setting for what I will call anthropology horror. Basically this is a creature-in-the-crate story like so many before it, but set almost entirely inside the museum. It’s an under-loved film in my opinion, featuring a decent monster and wonderful performances by Penelope Ann Miller, Tom Sizemore, and Linda Hunt. And the only reason it is set in Chicago is because New York’s American Museum of Natural History refused to let it be filmed there. Your loss, New York City!

Lastly, I must note Poltergeist III from 1988 not because it is a good movie — because it is not a good movie — but because it is one of the few horror films I know of, set anywhere, that takes place almost exclusively in a skyscraper. By the third film the idea of a house spirit was getting old, which I understand, but to this day I chuckle to think of someone proposing the idea of transplanting it to a luxe condo in the sky. Poltergeist III was filmed on location in the John Hancock building, using both its interiors and exterior scaffolding — real stunts — for effect.

Look, my travelers, there are plenty of other frights to explore in Illinois. If interested check out Something Wicked This Way Comes (1983), Henry: Portrait of a Serial Killer (1986), Flatliners (1990), The Unborn (2009) and The Rite (2011).

So that’s it for today. Hope you enjoyed this tour through the Land of Lincoln. Only 48 more states to go for this Terror Tourist. Thank you!

A full list of the movies mentioned above can be found at Letterboxd. Find out where to watch there.

The Terror Tourist is my occasional segment on the Heavy Leather Horror Show, a weekly podcast about all things horror out of Salem, Massachusetts. These segments are also available as an email newsletter. Sign up here, if interested. The segment begins at 16:30 in this episode:

Maybe Shark

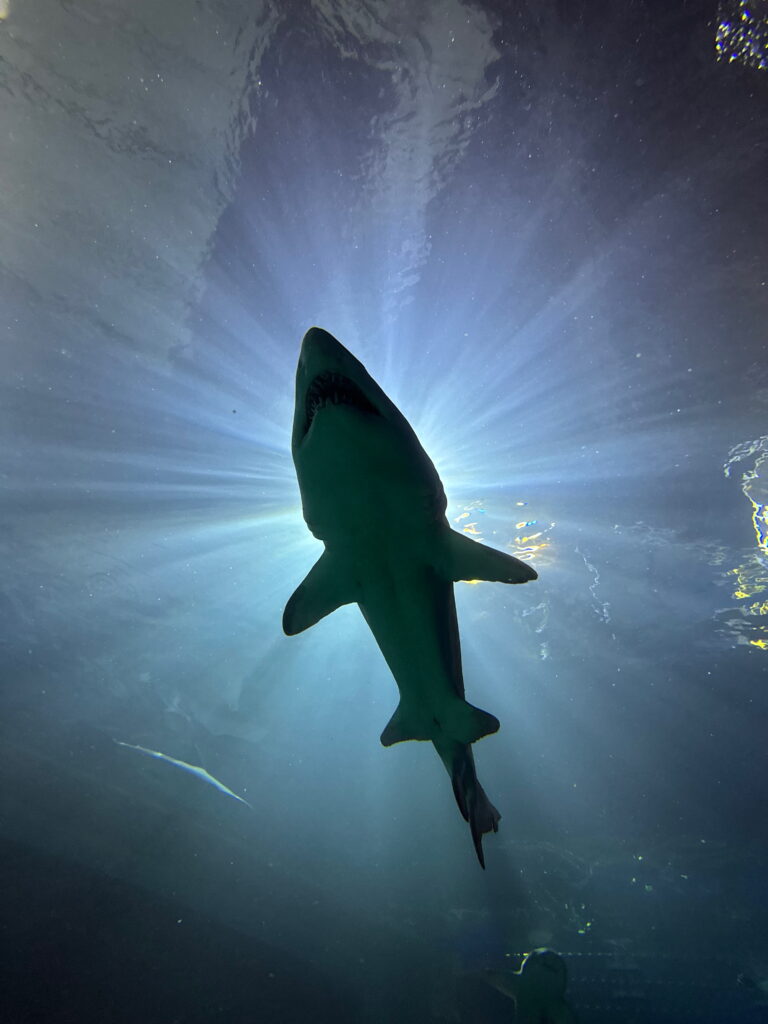

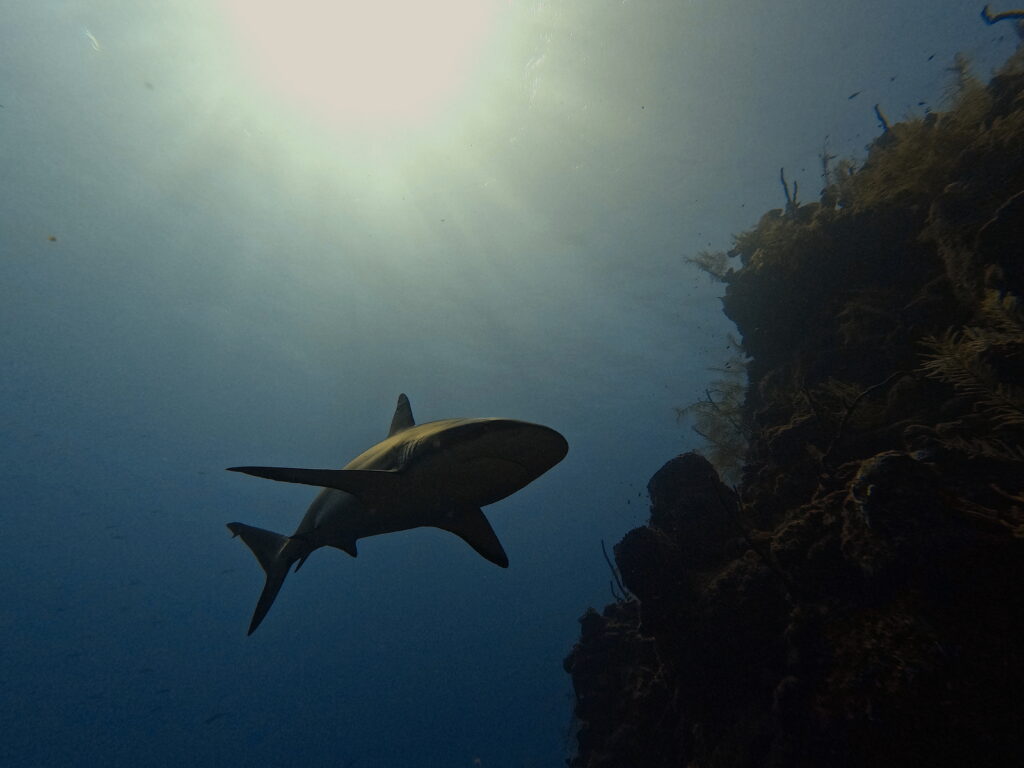

Greetings, travelers! Grab a towel, maybe a snorkel too. This week we’re returning to a destination you may recall from last year’s itinerary called Doom Shanty — the horrors of the underwater world. But as seasoned visitors on this second trip we’re focusing on a specific threat. This death from below is almost as old as natural threats get. It was swimming in the waters of our planet before the land had trees. I speak, of course, of the diverse group of apex predators we call sharks. On this 50th anniversary of Jaws, a movie which changed movie-going and some people’s views of the ocean forever, let’s look at the evolution of sharks as the thing we fear in movies in a segment called Maybe Shark (doo doo doo-doo doo-doo).

Sharks have been around for almost half a billion years. As reference Homo sapiens — modern humans — have been around a short 300,000 years. Sharks are really good at what they do, which is almost constantly eating, sometimes reproducing, and mostly not being killed by other hungry things in the ocean. This is why they are sometimes called nature’s perfect creatures. And yet, to call sharks’ evolution perfect is to presume some sort of direction towards perfection. This is a mistake, as evolution has no direction — it’s just a series of random genetic fuck-ups that sometimes yield cool new ways of not dying. Sharks have been remarkably successful in adapting to changing conditions — everything from competition for food to global extinction events.

And what accounts for this success? Let’s drop below the waves in this sturdy cage and have a closer look. Sharks are fish, but importantly — and weirdly — they are mostly boneless. Their body structure is kept relatively rigid by tissues made of cartilage (what human ears and nose tips are made of). Being boneless makes sharks more buoyant. Lucky for us, even this cartilage fossilizes to some degree which is how we know anything at all about the most ancient sharks. The skin of a shark is composed of thousands of tiny teeth-like structures called dermal denticles, which is why it feels like sandpaper. Put another way, sharks are literally covered nose to tail in teeth.

Sharks have phenomenal eyesight and an extraordinary sense of smell (they can detect odor molecules in dilutions as low as ten parts per billion). But sharks have a literal sixth sense too — they can detect electrical impulses in the water. Organs with the badass name of the Ampullae of Lorenzini form a network of mucus-filled pores that are both electroreceptors and magnetoreceptors. Why is this interesting? These ampullae are so sensitive that they can detect the electrical stimuli from the muscle contractions of potential prey. Too dark to see that tasty seal in the water column? No problem, sharks can feel their victims’ electrical presence. It’s almost magical. Having organic magnets is useful too. And this is why sharks can migrate ridiculously long routes around the planet without problem. The ampullae of Lorenzini are a built-in GPS system.

You’ve probably heard that sharks need to keep swimming or they will die. This is mostly true since, without lungs, it is the flow of oxygenated water through their gill plates that allows for respiration. But it isn’t completely true. Some bottom-dwellers, like angel and nurse sharks, have an extra organ that manually pulls water in over their gills so they can stay at rest for long periods of time. Which is why scuba divers will often encounter a shark just sitting there in a crevice. It’s momentarily alarming, sometimes terrifying, even if these species are completely harmless to humans.

So, yeah, sharks are adaptable. But it doesn’t end there. Have you heard of intrauterine cannibalism? Just what it says on the tin: shark sibling embryos that eat each other inside the uterus of their mother. (I must have missed the verse in the song Baby Shark on this.) How on earth is this a good thing? Turns out, sand tiger sharks only ever produce two pups — one from each uterus. This is far smaller than the normal litter of shark babies which for other species is at least a dozen. Baby sharks are small of course, which makes them prey, so the larger the litter size the better the chances some of them will survive. But with only two pups the sand tiger shark has the odds stacked against it. Unless the babies come out of the womb as seasoned killers already, that is! Not only do the cannibal embryos get a headstart learning the taste of blood from their unborn brothers and sisters, but statistically this is survival of the fittest — with only the best killers coming out into a world ready to climb up the predator chain quickly. There is video of intrauterine cannibalism. It is alien and disturbing and gross and completely awesome.

One note before we open this cage and soil our wetsuits. I mentioned above that one of the hallmarks of sharks’ longevity is that they are mostly not being killed by other hungry things in the ocean. They are certainly being killed by things on the ocean and that’s us, human beings. Mostly this is purely economic: humans kill 100 million sharks annually, mostly for the value of their fins, meat, skin, and liver oil. Many more are killed as bycatch when fishing for other things. But we’re also destroying their ecosystem as habitat is contaminated by toxic run-off, ripped to shreds by bottom trawling, or simply denuded of prey because ocean temperatures are rising past levels where fish can survive. More than a third of all shark species are facing extinction.

There’s another reason humans are a threat, though, and that has less to do with the actual killing of sharks and more with our attitudes towards them. The vilification of sharks is a full-blown industry, as the films we are about to discuss exemplify. With the exception of Pacific Islander cultures, the modern view of sharks has almost universally been one of fear and malevolence. We fear sharks thus we do not value them. This is a problem because an ocean without sharks is even scarier than an ocean with them. Without sharks, the global food web — a web that leads to your dinner plate and far beyond — would unravel. Entire ecosystems would collapse as prey species would overpopulate, herbivores would run amuck decimating coral reefs and seagrasses, making way for an explosion of algae all over everything. This would noticeably decrease oxygen production globally, making room for even more carbon dioxide in the atmosphere, hastening global warming. And we all know how that’s going. So, yeah, sharks are scary, but we need them if we want this third rock from the sun to continue to be habitable at all.

OK, the cage is unlocked. Find your dive buddy and let’s swim out slow and orderly. We’re gonna explore the history of sharks in horror movies.

Holy mackerel there are a lot of films about sharks! Far too many to watch — or want to watch. Lotta chum in these waters. But there are some useful ways of separating The Shark Cinematic Universe into categories. First, shark films are a good case study for discussing the differences between thrillers and horror movies. Often these two genres intermix. Sometimes thrillers have horrific elements. The best horror keeps you on the edge of your seat like a good thriller. But they are not the same. Horror’s engine is fear. A thriller runs on suspense. And this is why there are so many shark films that are not horror. For the most part Jaws from 1975 is the line demarcating the shift from shark thrillers to shark horror. (More specifically, that precise transitional moment was probably when young Alex Kintner gets torn apart on his raft.)

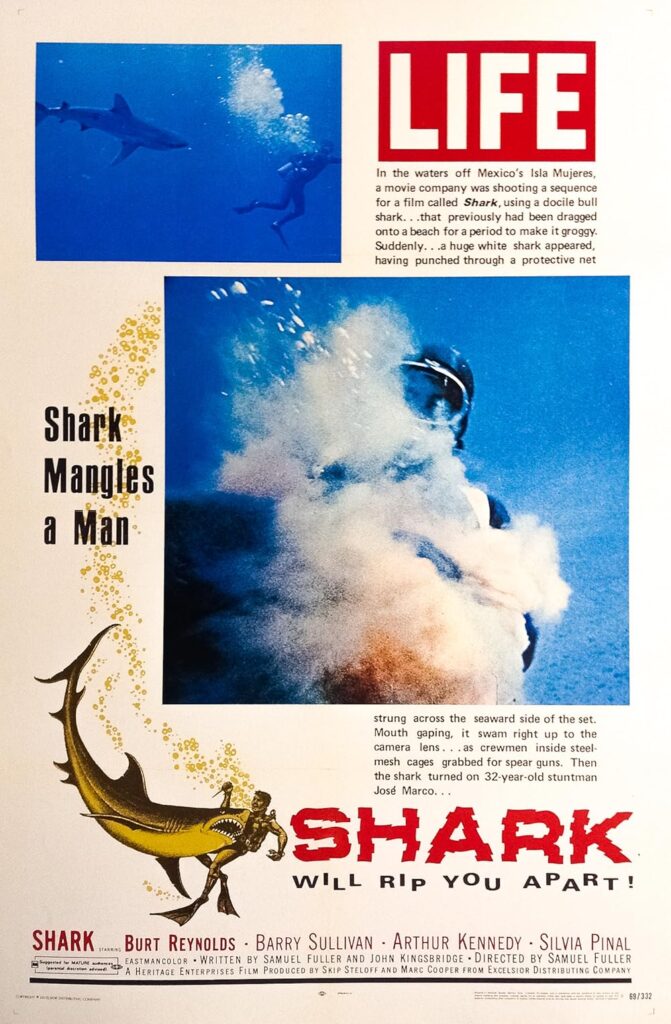

Pre-Jaws films with sharks were mostly adventure flicks. White Death (1936), filmed by Zane Grey as a fishing trip side-project, is basically a Western set on the ocean. The Sharkfighters (1956) is a fictionalized after-story of the sinking of the USS Indianapolis during WWII — the tale of shipwrecked sailors being ravaged en masse by sharks, which you may recall Quint famously recounts in Jaws. The Sharkfighters is notable for being one of the first films to use footage of real tiger sharks rather than puppets or dummies. Then there’s Shark (sometimes Shark!, 1969) starring Burt Reynolds and Silvia Pinal. Filmed in Mexico standing in for Sudan, this film was, by most accounts, an excuse for Reynolds and director Sam Fuller to party in beach towns. This movie has all the early tropes of ocean thrillers — unscrupulous treasure hunters, brave Westerners surrounded by caricatured locals, and the mere thought of sharks being in the water as all the tension needed. In a kind of snuff film-esque twist, it was claimed that a stunt diver was killed by a shark during filming. Life magazine did a photo spread of stills purportedly captured on film. After an investigation the magazine corrected itself, calling the story a hoax that involved a dead or drugged grey shark and “lots of ketchup”. But that didn’t stop the producers from marketing the film based on the supposed stuntman death. Maybe it was the success of this gruesome marketing that was the true harbinger of the turn towards horror just six years later.

Do we really need to talk about Jaws? Who has not seen this movie? OK fine, scaredy-pants. But who has not felt the cultural impact of this movie? No one. Usually listed as a thriller — and I can see this argument — it is undeniable that the result of Jaws was pure fear. To this day there are people of a certain generation who will not swim in the ocean because of this single movie. Only the second feature film of young director Steven Spielberg, Jaws was a notoriously difficult movie to make well — from the instability of filming at sea to the mechanical shark named Bruce to Robert Shaw battling alcoholism on-set. But made well it was, so well that it created an entire new category of movie, one whose lines of ticket-seekers wrapped around movie theater blocks, effectively “busting” them. The blockbuster became the new Hollywood business model: the pursuit of high box office returns from heavily-advertised action and adventure films released during the summer. For our purposes what Jaws proved is that there clearly was a market for being scared by sharks.

Sidenote: the main shark theme music — a could-not-be-simpler alternation of two notes one half-step apart — may be the most recognizable piece of music in the entire history of film. Composer John Williams described the theme as “grinding away at you, just as a shark would do, instinctual, relentless, unstoppable.”

You know what else is unstoppable? Film studios’ pursuit of money. After Jaws there was an absolute frenzy of shark movies looking to take a bite out of the market. Jaws itself had three sequels, the first of which was decent. The other two we shall not discuss. The 70s and 80s were awash with knock-off shark flicks: Mako: The Jaws of Death, which was first on the bandwagon in 1976 and at least spices up the plot with a man who achieves some telepathic connection with sharks from a shaman’s medallion, and Shark Kill, also from that year, which is just made-for-TV slop. Oh, but then Mexico got in on the action, releasing Tintorera (1977; we’ll come back to this one), Cyclone (1978), and Bermuda: Cave of the Sharks (also in 1978).

Hang on, don’t forget the Italians, next in line (such as Italians know how to form a line — I can say this, as I am Italian). Spending exactly one month in theaters in 1981 before being sued into oblivion by Universal was Great White (also known as The Last Shark) starring Vic Morrow who would be decapitated by a helicopter stunt gone horribly wrong on the set of Twilight Zone: The Movie less than two years later. Courts would rule that Great White was exactly the same film as Jaws. Which may be, but I found it to be a great watch. None other than giallo maestro Lucio Fulci was a fan of the aforementioned Tintorera. He wasn’t a big enough fan to approve a zombie versus shark scene suggested by his producer for his film Zombi 2 (aka Zombie Flesh Eaters), but that didn’t stop it from being filmed by his special effects lead Giannetto De Rossi inside a large saltwater tank with a recently-fed and heavily-sedated shark. Eventually Fulci agreed to include the now-infamous (and ridiculous) sequence. But here’s the interesting part: a local Mexican diver and photographer named Ramón Bravo was hired to fill in for the stunt man as the underwater zombie fighting the actual shark. Ramón Bravo wrote the novel Tintorera upon which the movie was based! Full circle.

So that’s the immediate before and after Jaws. First, sharks as thriller plot sidepieces shot poorly without color correction (or using stock footage). Then Jaws. Then a whole bunch of not-great derivative films coming from the usual suspect countries. That’s only the beginning though. Things start getting really good again — or at the very least, more interesting — around the turn of the millennium. This is largely due to what I consider the second-best shark horror film ever made, Deep Blue Sea. Directed by Renny Harlin and released in 1999, this film stars Thomas Jane, Saffron Burrows, Samuel L. Jackson, and LL Cool J. Which, c’mon, that’s one hell of a cast. The premise here is basically: what if we made a killer shark movie more like a slasher film? You may be wondering, how would you even do that? First, genetically enhance your sharks in a secret research facility. Make ’em smarter and more cunning. Then partially flood the facility so the sharks can move about its corridors, but humans can still run and move through the same corridors. Voilà! Slasher shark film! You may think that there was nothing redeeming about James Cameron’s Titanic from 1997, but you would be wrong, as the sets for Deep Blue Sea were constructed atop the massive water tanks that had been built in Baja California for Titanic. Its heart — or at least its massive tanks — did go on. Deep Blue Sea is a fun film, a scary film, and a pretty novel one at that. The baddies here aren’t the sharks so much as the people who modified and experimented on them. Sharks as antagonist but not as villain; sharks as bullet but not the gun. This is a tonal shift we will see again. More than anything Deep Blue Sea jumpstarted shark films again, yielding a whole lotta awful — basically the second wave of sharksploitation — interspersed with some pearls.

It took about a decade to really get going, but honestly the sheer number and (at least conceptual) creativity of the shark films about to be unleashed on the public are impressive. Let’s run down a few:

- In Sharktopus (2010) there’s only one reason the US Navy would engineer a half-shark, half-octopus creature for combat. It’s so that we could look back on the relative sanity of this hybrid once the sequels were released. Please enjoy Sharktopus vs. Whalewolf and Sharktopus vs. Pteracuda.

- The premise of Sharknado (2013) starts with a tornado that scoops up and throws ravenous sharks all over town. And that’s the least ridiculous of the premises of the six Sharknado films, a series which, like all horror series that have run their course, eventually goes to outer space.

- Ghost Shark (2013) solves a problem with sharks, namely that you can only be attacked by one if you are in a large body of water. This movie is hilarious. The slightest amount of water, even just a puddle, can unleash Ghost Shark!

- Please read this movie title a few times: Sharkansas Women’s Prison Massacre (2015). This film delivers everything (except an enjoyable experience): a fracking mishap, giant prehistoric sharks, female prisoners on a work detail in a swamp, and Traci Lords playing a detective for some reason.

- As a fan of Christmas horror, I watched Santa Jaws (2018). My strong advice to you, travelers, is not to watch this. The gag of a Santa hat being worn atop a shark dorsal fin slicing through the water is mildly humorous precisely one time.

- Midwest represent! Sharks of the Corn (2021) is exactly what it sounds like — and should not be.

- Nothing stirs a sense of patriotism like Americans fighting unkillable sharks launched to the moon by the Soviets years ago. Please salute Shark Side of the Moon (2022).

As you can see from these films, sharks have become bolt-on accoutrements to almost the entire range of horror tropes and sub-genres. Monster movies, ghost flicks, religious horror, space frights, and then just straight-up replacement of killers from previous films with … sharks. Is this lazy? Sure. Is it fun? Usually! In 75 years sharks have gone from representing the threat of the unknown, to evil killers, to placeholders for whatever situation needs more menace. We said it before at the beginning of this journey, but it probably bears repeating: sharks have been around forever because they are almost infinitely adaptable.

Travelers, I know what you are thinking: there are so many shark horror films — and most of them are terrifically bad. Can’t you please provide a smaller map of the best destinations? Sure, can do.

Open Water (2003) — Based on the true story of two scuba divers left behind when their dive operator doesn’t perform a proper headcount, the horror here is more the terror of being alone in the sea. Only later do sharks do what sharks do best. As for what really happened to these divers we will never know. Maybe they died well before any sharks showed up. Maybe there were never any sharks at all. All we do know is that those divers did not live.

The Reef (2010) — Based super loosely on a true story where two people were killed by sharks, this film uses real sharks superbly edited to tell the tale of a group of folks trapped on a capsized yacht. A very similar premise to Open Water. The characters here just have the hull of a boat to cling to instead of bobbing around in the waves. The result is the same ultimately.

Bait (2012) — Australians know how to do sharks (as you might remember from the recent grotch Dangerous Animals). Bait takes place almost entirely inside a flooded grocery store next to a beach. If you liked the everyday confines of the movie The Mist and sharks in corridors like Deep Blue Sea you’ll love this.

The Shallows (2016) — Starring Blake Lively … and a shark. And that’s really it. What’s incredible about this film is how a single person trapped on a rock with a Great White shark circling around makes for a compelling film. But it very much does.

47 Meters Down (2017) — Stuck in a cage at the very limits of recreational diving (and nitrogen narcosis), this is The Shallows with one extra character and a giant twist at the end. Obviously there are sharks, but there’s also the overriding dread of an ever-diminishing air supply to quicken the pulse.

Under Paris (2024) — I found this more compelling than the Olympics opening ceremony it was meant to prefigure (which also took place on the Seine). Under Paris is actually a really fun movie with flooded catacombs, mutant sharks, and a triathlon stand-in for the Olympics that becomes a chomping smorgasbord.

There are so many others. You could watch one shark horror film every week for a year and probably not run out. I do not advise doing this.

Let’s conclude this week’s journey with a quote from a recent New Yorker piece on what we really have to fear about sharks:

There is no such thing as shark-infested waters, in the same way that there is no such thing as a child-infested school. You cannot infest your own home. Fear is, of course, a great good. It can be a form of wisdom. But if we could reorient the sentiment—and direct it, for instance, toward those humans whose vested interests lie in persuading us to acquiesce in the living world’s destruction—we would fare better. Beware an ExxonMobil-infested State Department; beware a fossil-fuel-infested politics. These are dark times, and there are many things to fear. But none of them are found swimming under a vast sky as the waters around us warm and empty.

That’s it, my waterlogged travel-mates. You can surface now, but carefully. Stay vigilant out there, but I really wouldn’t worry about sharks. Until next time, I am your faithful tour guide.

A full list of the movies mentioned above can be found at Letterboxd. Find out where to watch there.

The Terror Tourist is my occasional segment on the Heavy Leather Horror Show, a weekly podcast about all things horror out of Salem, Massachusetts. These segments are also available as an email newsletter. Sign up here, if interested. The segment begins at 22:40 in this episode:

Land of the Shtriga

It’s been a while since our journey took us to an actual place, like a country. Let’s get back to our roots here.

What makes a horror film representative of a nation? It can’t be merely that the country is used as a shooting location. If it were, there would be hundreds of films we could consider Hungarian, Croatian, or Slovakian horror. Sometimes I think every third person on the street in Budapest is a film fixer. But that’s not how it works. To be a national horror generally requires at least one of these two characteristics (and the best have both): 1) a majority of the cast and crew come from the country (bonus if that includes the director, writer, and lead actors) and/or, especially, 2) the story centers on regionally- or nationally-specific fears, concerns, and/or cultural narratives. Very few filmmakers set out to make a national horror, though some do — looking at you, A Serbian Film. But many use a region’s cultural memory of folklore or legend to make something unique.

So where are we going? If you’re coming from the USA, cross the Atlantic, and head to southern Europe. You can grab an espresso in Italy, but keep going. Cross the Adriatic, wave to the pretty dogs on the Dalmatian coast but do not stop — move southeasterly. Hit Greece? Too far. Back up. Our destination today is the incredible country of Albania in an installment called Land of the Shtriga.